Video Extrapolation - Forward in time

Extrapolated Frames (GREEN)

Video Extrapolation - Backward in time

Extrapolated Frames (GREEN)

Note how backward extrapolations of the same video yield different outputs due to the inherent stochasticity of diffusion models.

Spatial Video Retargeting

The rest are retargeted to different spatial shapes.

Temporal Upsampling

First row: Input video

Second row: Linear interpolation

Third row: SinFusion (Ours) interpolation

Fourth row: RIFE [1] interpolation
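
For readers unfamiliar with the linear-interpolation baseline, a minimal sketch follows. It assumes the baseline is a per-pixel cross-fade between consecutive input frames, which is the usual meaning of the term; the page does not say how the baseline was produced. The function name `linear_interpolate_frames` and the `factor` parameter are hypothetical, and this is not the authors' code.

```python
import numpy as np


def linear_interpolate_frames(frames: np.ndarray, factor: int = 2) -> np.ndarray:
    """Temporally upsample a video by blending neighbouring frames.

    frames: array of shape (T, H, W, C).
    factor: number of output steps per original frame interval (assumed parameter).
    Returns an array with (T - 1) * factor + 1 frames.
    """
    if factor < 1:
        raise ValueError("factor must be >= 1")
    frames = frames.astype(np.float32)
    num_frames = frames.shape[0]
    if num_frames < 2:
        # Nothing to interpolate between.
        return frames.copy()

    out = []
    for i in range(num_frames - 1):
        for k in range(factor):
            alpha = k / factor  # 0 at frame i, approaching 1 towards frame i + 1
            out.append((1.0 - alpha) * frames[i] + alpha * frames[i + 1])
    out.append(frames[-1])  # keep the final original frame
    return np.stack(out)
```

Because each in-between frame is a weighted average of its two neighbours, moving objects appear as semi-transparent double exposures rather than at intermediate positions, which is the kind of artifact a cross-fade baseline typically shows.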

Note that:

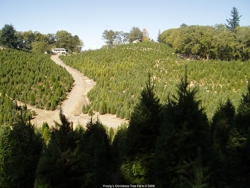

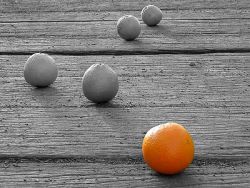

Diverse Image Generation
